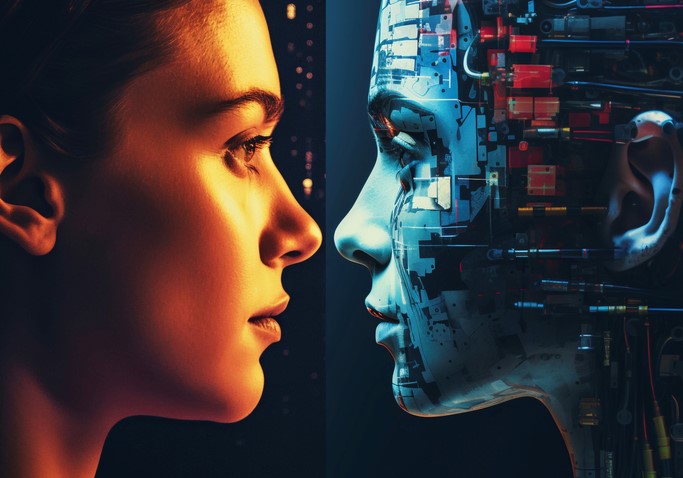

Imagine receiving a phone call from your grandmother. The cadence of her voice, that specific way she laughs, and even her subtle regional accent are all there. She tells you she’s lost her voice due to a recent illness, but thanks to a small device, she can still “speak” to you in her own tone just by typing. This isn’t a scene from a sci-fi movie; it’s a reality we are building today.

As someone who has spent over a decade navigating the corridors of HealthTech and software development, I’ve watched AI-generated voices evolve from the “robotic stutter” of early GPS systems to something so eerily human that it can pass the “Turing Test” of the ear. We are no longer just making machines talk; we are giving them a soul, or at least a very convincing digital mimicry of one.

From Robotics to Realism: How Does It Sound So Real?

In my early years as a tech writer, “Text-to-Speech” (TTS) was a frustrating experience. It was clunky and devoid of emotion because it used a method called “Concatenative Synthesis.” Think of it like a ransom note made of magazine clippings; the computer would stitch together tiny fragments of recorded human speech. It worked, but it sounded like a ghost trapped in a microwave.
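
To make the ransom-note analogy concrete, here is a minimal sketch of concatenative synthesis in Python. It assumes a hypothetical unit inventory (`UNITS`) in which each recorded fragment is replaced by a synthetic sine snippet; a real system would select diphones from hours of studio recordings. The only smoothing is a short linear crossfade at each join, and those joins are exactly where the audible seams of the old systems came from.

```python
import math

SAMPLE_RATE = 16_000  # samples per second (assumed)

def tone(freq_hz: float, dur_s: float) -> list[float]:
    """Synthetic stand-in for one recorded speech fragment."""
    n = int(round(SAMPLE_RATE * dur_s))
    return [0.5 * math.sin(2 * math.pi * freq_hz * i / SAMPLE_RATE) for i in range(n)]

# Hypothetical unit inventory: in a real system these would be recorded diphones.
UNITS = {
    "h-e": tone(220.0, 0.08),
    "e-l": tone(240.0, 0.08),
    "l-o": tone(200.0, 0.10),
}

def concatenate(unit_names: list[str], crossfade: int = 80) -> list[float]:
    """Stitch stored fragments end to end, blending `crossfade` samples at each join."""
    out: list[float] = list(UNITS[unit_names[0]])
    for name in unit_names[1:]:
        nxt = UNITS[name]
        k = min(crossfade, len(out), len(nxt))
        # Linear crossfade: fade out the tail of `out` while fading in the head of `nxt`.
        for i in range(k):
            w = (i + 1) / (k + 1)
            out[len(out) - k + i] = (1 - w) * out[len(out) - k + i] + w * nxt[i]
        out.extend(nxt[k:])
    return out

word = concatenate(["h-e", "e-l", "l-o"])
```

Nothing here is generated; every sample comes from a stored fragment, which is why such systems could only ever sound as expressive as their recordings.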

Today, AI-generated voices use Neural Networks and Deep Learning. To explain this simply, imagine a talented impressionist. An impressionist doesn’t just repeat words; they study the breath, the rhythm, and the pitch of a person.

Modern AI does exactly this through Neural TTS. It analyzes thousands of hours of human speech to learn “prosody,” the patterns of stress and intonation in a language. It doesn’t “play back” recordings; it generates new audio, predicting what the next stretch of the sound wave should be based on the context of the sentence. Autoregressive models such as WaveNet literally do this one audio sample at a time.
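
The “predict the next sound” loop can be illustrated with a deliberately tiny autoregressive model. In this sketch a fixed two-coefficient linear predictor stands in for the neural network, an assumption made purely for illustration: WaveNet-style models learn millions of parameters and also condition on the text. Each new sample is computed only from samples already generated and is then fed back in as context.

```python
import math

def generate(num_samples: int, freq_hz: float, sample_rate: int = 16_000) -> list[float]:
    """Autoregressive generation: every sample is predicted from the previous two."""
    w = 2 * math.pi * freq_hz / sample_rate
    a1, a2 = 2 * math.cos(w), -1.0      # predictor coefficients (stand-in for a trained model)
    samples = [0.0, math.sin(w)]        # seed context: the first two samples
    while len(samples) < num_samples:
        prediction = a1 * samples[-1] + a2 * samples[-2]
        samples.append(prediction)      # feed the prediction back in as context
    return samples[:num_samples]

wave = generate(400, freq_hz=220.0)
```

With these particular coefficients the recurrence reproduces a pure sine exactly; a neural model plays the same game but with a predictor rich enough to produce speech.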

The Tech Stack: The Engine Behind the Voice

If you’re curious about the “how,” it boils down to two main components that I often encounter in the development of healthcare communication apps:

1. The Text Analysis Module

This is the “brain.” It works out how each word should be spoken: is “record” a noun (REC-ord) or a verb (re-CORD)? Is “read” present tense (“reed”) or past tense (“red”)? It also uses punctuation to know when to pause and “take a breath.”
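
Here is a minimal, rule-based sketch of those two jobs. The heteronym table, the pronunciation spellings, and the pause lengths are all hypothetical, and the “is the previous word a determiner?” rule is a crude stand-in for the part-of-speech taggers and learned models real front ends use. It only shows the shape of the problem: text goes in, and pronunciations plus pause markers come out.

```python
import re

# Hypothetical heteronym table: word -> (noun reading, verb reading)
HETERONYMS = {
    "record": ("REK-erd", "ri-KORD"),
    "present": ("PREZ-uhnt", "pri-ZENT"),
}
DETERMINERS = {"a", "an", "the", "this", "that", "my", "your"}
PAUSE_MS = {",": 200, ";": 300, ".": 500, "?": 500, "!": 500}  # assumed durations

def analyze(text: str) -> list[str]:
    """Return word tokens (with heteronyms resolved) and <pause> markers."""
    out: list[str] = []
    prev = ""
    for tok in re.findall(r"[A-Za-z']+|[,;.?!]", text):
        if tok in PAUSE_MS:
            out.append(f"<pause {PAUSE_MS[tok]}ms>")
            prev = ""
            continue
        word = tok.lower()
        if word in HETERONYMS:
            noun, verb = HETERONYMS[word]
            # Crude part-of-speech guess: a preceding determiner suggests a noun.
            out.append(noun if prev in DETERMINERS else verb)
        else:
            out.append(word)
        prev = word
    return out

print(analyze("Please record the record, then stop."))
# → ['please', 'ri-KORD', 'the', 'REK-erd', '<pause 200ms>', 'then', 'stop', '<pause 500ms>']
```

Note that a tense ambiguity like “read” can’t be settled by this rule at all, which is why modern front ends lean on statistical models that look at the whole sentence.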

2. The Neural Vocoder

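This is the “vocal cords.” This part of the architecture takes the abstract acoustic representation built from the analysis module’s output (typically a mel spectrogram) and converts it into actual audio waves. WaveNet pioneered neural audio generation here. Tacotron, strictly speaking, works one step earlier, predicting that spectrogram from text, and Tacotron 2 paired it with a WaveNet vocoder. Together they produced smooth, high-fidelity sound without the metallic “buzz” of the past.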
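
A neural vocoder is far too large to reproduce here, but its contract is simple: a sequence of acoustic frames goes in, a waveform comes out. The sketch below keeps that contract while swapping the neural network for a classic, non-neural sinusoidal synthesizer. It assumes each frame carries only a pitch (f0) and a loudness value rather than a full mel spectrogram; the `Frame` type and the 10 ms hop size are illustrative assumptions.

```python
import math
from dataclasses import dataclass

SAMPLE_RATE = 16_000
HOP = 160  # samples per frame, i.e. 10 ms at 16 kHz (assumed)

@dataclass
class Frame:
    f0_hz: float       # fundamental frequency for this 10 ms slice
    amplitude: float   # 0.0 means silence

def vocode(frames: list[Frame]) -> list[float]:
    """Turn frame-level acoustic features into audio samples."""
    out: list[float] = []
    phase = 0.0
    for frame in frames:
        step = 2 * math.pi * frame.f0_hz / SAMPLE_RATE
        for _ in range(HOP):
            # Carry phase across frames so pitch changes don't cause clicks.
            phase = (phase + step) % (2 * math.pi)
            out.append(frame.amplitude * math.sin(phase))
    return out

# A short rising "syllable" followed by 50 ms of silence.
frames = [Frame(180.0 + 5 * i, 0.6) for i in range(20)] + [Frame(0.0, 0.0)] * 5
audio = vocode(frames)
```

A neural vocoder replaces the single sine with a learned model that can render breathiness, consonants, and timbre, which is where the leap in realism comes from.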

Revolutionizing Industries: It’s Not Just for Siri Anymore

While most people encounter AI voices through virtual assistants, my experience in the field has shown me much deeper applications that are transforming how we work and live.

Healthcare: Restoring the Gift of Speech

In the HealthTech niche, we use Voice Cloning for patients with ALS or those undergoing laryngectomies. By recording their voice before they lose it, we can create a permanent digital clone. This allows them to communicate with their loved ones using their own unique identity, preserving dignity in a way that “Stephen Hawking-style” voices never could.

Content Creation and Dubbing

The creative industry is undergoing a massive shift. I recently saw a demo where a video was translated from English to Spanish. Not only did the AI-generated Spanish voice match the original actor’s, but the AI also adjusted the actor’s lip movements to sync with the new language. This is a game-changer for global education and entertainment.

Personalized Customer Experience

Imagine a bank where the AI voice on the phone recognizes your mood. If you sound frustrated, the AI lowers its pitch and adopts a “calming” tone. This Emotionally Intelligent AI is the new frontier of customer service.
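
One plausible way to wire this up is to map a detected mood to speech-synthesis settings. The sketch below assumes a mood label already arrives from some upstream classifier (hypothetical here) and emits W3C SSML, whose `<prosody>` element accepts `pitch` and `rate` values such as `low` and `slow`. The mood-to-style table is an illustrative assumption, not any vendor’s behavior.

```python
from xml.sax.saxutils import escape

# Hypothetical mapping from a caller's detected mood to prosody settings.
STYLE_FOR_MOOD = {
    "frustrated": {"pitch": "low", "rate": "slow"},   # calmer, lower delivery
    "neutral": {"pitch": "medium", "rate": "medium"},
}

def build_ssml(reply: str, mood: str) -> str:
    """Wrap the agent's reply in SSML prosody tags chosen from the caller's mood."""
    style = STYLE_FOR_MOOD.get(mood, STYLE_FOR_MOOD["neutral"])
    return (
        '<speak version="1.1" xmlns="http://www.w3.org/2001/10/synthesis" xml:lang="en-US">'
        f'<prosody pitch="{style["pitch"]}" rate="{style["rate"]}">{escape(reply)}</prosody>'
        "</speak>"
    )

print(build_ssml("I understand. Let's fix this together.", "frustrated"))
```

Keeping the style table separate from the speech engine means the “calming” behavior can be tuned without touching the voice model itself.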

Pro Tips: How to Spot (and Create) Quality AI Audio

Whether you are looking to use these tools for your brand or just trying to navigate a world full of deepfakes, here is some “Expert Advice” from the trenches:

Pro Tip: The “Breath” Test

When choosing an AI voice platform, listen for the “inhales.” High-quality AI voices now include subtle non-verbal sounds like tiny breaths or the click of a tongue. If the voice is a constant stream of sound with no pauses for air, it tends to fatigue the listener’s ear within minutes.
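
If you want to check this more objectively than by ear, a rough sketch is to split the audio into short frames, measure each frame’s energy, and find the longest stretch with no quiet frame. The 20 ms frame size and the silence threshold are assumptions you would tune for real recordings, and the input is assumed to be mono samples scaled to the range -1.0 to 1.0.

```python
import math

def longest_run_without_pause(samples: list[float], sample_rate: int = 16_000,
                              frame_ms: int = 20, silence_rms: float = 0.01) -> float:
    """Return the longest stretch (in seconds) of consecutive non-silent frames."""
    frame_len = sample_rate * frame_ms // 1000
    longest = current = 0
    for start in range(0, len(samples) - frame_len + 1, frame_len):
        frame = samples[start:start + frame_len]
        rms = math.sqrt(sum(s * s for s in frame) / frame_len)
        if rms < silence_rms:
            current = 0            # a pause (breath) resets the run
        else:
            current += 1
            longest = max(longest, current)
    return longest * frame_ms / 1000

# 3 s of continuous tone vs. the same tone broken up by a 0.3 s pause.
tone = [0.3 * math.sin(2 * math.pi * 200 * n / 16_000) for n in range(48_000)]
with_breath = tone[:24_000] + [0.0] * 4_800 + tone[24_000:]
```

A long unbroken run is a hint, not proof: it flags narration that never “breathes,” but a good ear is still the final judge.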

Hidden Warning: The Ethics of Cloning

Never clone a voice without explicit, documented consent. In the tech industry, we are seeing a rise in “Voice Phishing,” where AI mimics a CEO or family member to steal data. Always use platforms that have built-in watermarking and strict “Identity Verification” protocols.
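
Commercial watermarking schemes are proprietary and far more robust than anything that fits here, but the core idea behind one classic family, spread-spectrum watermarking, can be sketched briefly: add a very quiet pseudo-random ±1 pattern derived from a secret key, then detect it later by correlating the audio with the same pattern. The key values, strength, and threshold below are illustrative assumptions, and this toy would not survive compression or re-recording.

```python
import math
import random

def pn_sequence(key: int, length: int) -> list[float]:
    """Pseudo-random +1/-1 pattern that only the key holder can regenerate."""
    rng = random.Random(key)
    return [1.0 if rng.random() < 0.5 else -1.0 for _ in range(length)]

def embed(samples: list[float], key: int, strength: float = 0.01) -> list[float]:
    """Add a quiet keyed pattern on top of the audio."""
    pn = pn_sequence(key, len(samples))
    return [s + strength * p for s, p in zip(samples, pn)]

def detect(samples: list[float], key: int, strength: float = 0.01) -> bool:
    """Correlate with the keyed pattern; a score near `strength` means marked."""
    pn = pn_sequence(key, len(samples))
    score = sum(s * p for s, p in zip(samples, pn)) / len(samples)
    return score > strength / 2

# 10 s of a stand-in "speech" tone at 16 kHz.
speech = [0.3 * math.sin(2 * math.pi * 180 * n / 16_000) for n in range(160_000)]
marked = embed(speech, key=1234)
```

The correlation works because the audio and the key’s pattern are unrelated, so their product averages out toward zero, while the embedded pattern lines up with itself and leaves a score near `strength`.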

Why Now?

Why is AI-generated voice technology exploding right now? A few key factors:

- Computational Power: We finally have the GPU strength to run these complex neural models in real time.
- Data Availability: The sheer volume of high-quality audio online has provided the perfect “training ground” for AI.
- Accessibility: You no longer need a Ph.D. in Data Science. Tools like ElevenLabs, Play.ht, and Murf.ai allow anyone to generate professional audio in seconds.

The “Uncanny Valley” of Sound

We’ve talked about the “Uncanny Valley” in visuals, but it exists in audio too. This is the point where a voice sounds almost human, yet something is slightly “off,” triggering a sense of unease.

I’ve found that the best AI voices actually embrace a bit of imperfection. We call this Stochasticity. By adding a tiny bit of random variation in pitch and timing (the kind humans have naturally), the AI moves past the “creepy” phase and becomes genuinely pleasant to listen to for long periods, as in an audiobook.
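
A minimal sketch of that idea: start from a planned pitch and duration for each syllable, then add small, seeded random variation. The `Syllable` structure and the ±3% pitch and ±8% duration jitter sizes are assumptions for illustration. Rather than bolting on uniform noise as done here, many neural systems learn this variability and sample from it.

```python
import random
from dataclasses import dataclass

@dataclass
class Syllable:
    text: str
    pitch_hz: float
    duration_ms: float

def humanize(plan: list[Syllable], seed: int, pitch_jitter: float = 0.03,
             duration_jitter: float = 0.08) -> list[Syllable]:
    """Return a copy of the plan with small random pitch and timing variation."""
    rng = random.Random(seed)  # seeded so a given "take" can be reproduced
    return [
        Syllable(
            s.text,
            s.pitch_hz * (1 + rng.uniform(-pitch_jitter, pitch_jitter)),
            s.duration_ms * (1 + rng.uniform(-duration_jitter, duration_jitter)),
        )
        for s in plan
    ]

plan = [Syllable("once", 190.0, 220.0), Syllable("up", 200.0, 120.0),
        Syllable("on", 185.0, 130.0), Syllable("a", 180.0, 80.0),
        Syllable("time", 170.0, 300.0)]
take = humanize(plan, seed=7)
```

Seeding matters in production: the same seed reproduces a take exactly for re-edits, while a new seed gives a slightly different but equally natural reading, much like asking a narrator for another pass.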

Conclusion: A Symphony of Silicon and Soul

The era of the “Robot Voice” is officially dead. AI-generated voices are paving the way for a more inclusive, efficient, and personalized world. From helping a patient find their voice again to allowing a small creator to produce a Hollywood-level documentary, the barriers are falling.

However, as we embrace this future, we must remain the “conductors” of this digital symphony. Technology provides the instrument, but human ethics and creativity must provide the melody.

What do you think? If you could “save” your voice in a digital vault for your grandchildren to hear 50 years from now, would you do it? Or does the idea of a digital voice living on feel a bit too strange?

Share your thoughts in the comments below—I’d love to hear your perspective on this vocal revolution!